Imagine using your phone’s Face ID to unlock it. You glance at the screen, and it recognizes you instantly, whether you are in bright sunlight, wearing glasses, or even after a new haircut.

Now think about self-driving cars. They don’t just follow painted lane markings; they recognize pedestrians, traffic lights, and cyclists weaving through traffic.

How do these systems work so well? The answer is deep learning, one of the most powerful AI technologies ever developed. But what makes deep learning different from older AI? Let’s break it down.

From Fixed Rules to Learning from Examples

Early AI worked like a rigid checklist. If you wanted a computer to recognize a cat, you had to tell it exactly what to look for. A cat has pointy ears. A cat has whiskers. A cat has fur. The problem was that if the cat was lying down, facing sideways, or had unusual markings, the AI failed.

Later, AI improved by learning from trial and error. Systems could adjust themselves based on feedback, slowly correcting their mistakes. But they were still limited because they relied on simple features chosen by people. A stop sign was recognized as a red octagon; if it was covered in graffiti or bent, the AI might miss it.
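To make "adjusting based on feedback" concrete, here is a minimal sketch of one classic error-correcting learner, the perceptron, in pure Python. It is an illustration chosen for this article, not a description of any particular historical system. The toy data is an assumption: each example has two hypothetical, hand-picked features, a "redness" score and an "octagon" score, for a stop-sign detector.

```python
# A minimal perceptron: a classic "learn from mistakes" algorithm.
# Hypothetical toy data: (redness, octagon_score), both in [0, 1];
# label 1 = stop sign, 0 = not a stop sign.
examples = [
    ((0.9, 0.95), 1),
    ((0.8, 0.90), 1),
    ((0.9, 0.10), 0),  # red but round (e.g., a red ball)
    ((0.1, 0.90), 0),  # octagonal but not red
    ((0.2, 0.20), 0),
]

def predict(weights, bias, features):
    """Return 1 if the weighted sum of the features is above zero, else 0."""
    total = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if total > 0 else 0

def train(examples, max_epochs=100, learning_rate=0.1):
    """Perceptron rule: change the weights only when a prediction is wrong."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for features, label in examples:
            error = label - predict(weights, bias, features)  # feedback: -1, 0, or +1
            if error != 0:
                mistakes += 1
                # Nudge each weight in the direction that would have reduced the error.
                weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
                bias += learning_rate * error
        if mistakes == 0:  # a full pass with no mistakes: training has converged
            break
    return weights, bias

weights, bias = train(examples)
print([predict(weights, bias, f) for f, _ in examples])
```

Because this toy data can be separated by a straight line, the perceptron is guaranteed to stop making mistakes on it. But the learner only ever sees the two features a person chose, so anything those features miss (graffiti lowering the "redness" score, a bent sign that no longer looks octagonal) is invisible to it, which is exactly the limitation described above.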

Deep learning changed the game. Instead of following hand-written rules or relying on hand-picked features, it learns which features matter directly from massive amounts of data. This shift made AI far more flexible and reliable.

The Assembly Line of Layers

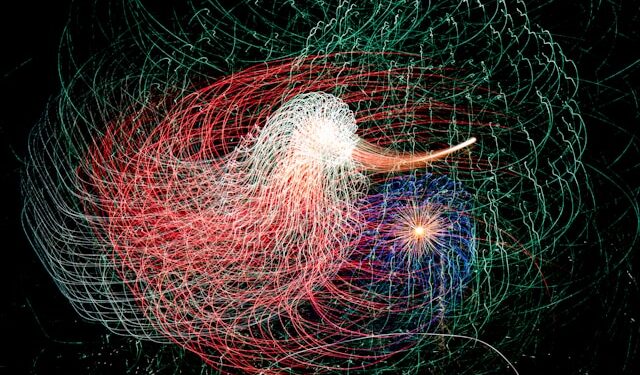

What makes deep learning special is its layered approach. Information passes through many stages, each one adding a little more understanding.

Think of it like a high-tech assembly line.

In the first layers, the system detects very simple patterns such as edges, curves, or colors. In the middle layers, it begins to recognize features like a nose in a face or a wheel in a car. In the deepest layers, it combines these features to recognize complete objects, such as an entire face, or to tell a pedestrian apart from a stop sign.
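Here is a minimal sketch, in pure Python, of what one of those first layers computes: a small 3×3 filter slid across an image, followed by a step that keeps only positive responses. The filter values are hand-set here as an assumption for illustration; in a trained network they are learned from data, and deeper layers apply the same kind of operation to the resulting feature maps rather than to raw pixels.

```python
# Toy illustration of what an early, edge-detecting layer computes.
# Assumption: the filter is hand-set; a real network learns its filter values.

def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    Strictly this is cross-correlation, which is what deep learning
    libraries usually call "convolution".
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for a in range(kh):
                for b in range(kw):
                    total += image[i + a][j + b] * kernel[a][b]
            row.append(total)
        out.append(row)
    return out

def relu(feature_map):
    """Keep positive responses and set everything else to zero."""
    return [[max(0, v) for v in row] for row in feature_map]

# A 5x6 grayscale "image": dark (0) on the left, bright (1) on the right.
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]

# Vertical-edge filter: responds where brightness rises from left to right.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

edges = relu(conv2d(image, vertical_edge))
for row in edges:
    print(row)
```

Each printed row is `[0, 3, 3, 0]`: the filter fires only in the columns where dark turns to bright, that is, along the vertical edge, and stays silent over the flat regions on either side.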

This step-by-step refinement is why deep learning handles messy handwriting, blurry photos, and unpredictable real-world scenarios better than older AI methods.

Everyday Applications

Deep learning now powers many services people use daily.

In smartphones, it drives facial recognition features such as Face ID. Older approaches measured distances between the eyes, nose, and mouth and could fail if you wore glasses or grew a beard. Deep learning models, trained on millions of faces, can still recognize you through changes in lighting, hairstyle, or accessories.

In self-driving cars, older systems followed lane markings and failed in poor weather. Deep learning models are trained on millions of hours of driving data, enabling them to recognize roads even when the lines are faded and to react to sudden events, like a cyclist swerving onto the street.

Beyond these, deep learning powers medical diagnosis, language translation, online recommendations, and even the generation of realistic images.

Challenges of Deep Learning

Despite its success, deep learning is not perfect.

- Cost: It requires massive computing power, often on specialized hardware, and consumes enormous amounts of energy.

- Opacity: Deep learning models are often described as "black boxes" because it is hard to explain why they reached a particular decision. This lack of transparency is a major issue in fields like healthcare, finance, and law enforcement.

- Fragility: They can also be tricked. A small, carefully crafted change to an image, often imperceptible to a human, can cause a model to misclassify a cat as a dog.
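The "tricked by a small change" problem can be sketched in a few lines. The example below applies the fast gradient sign method, one well-known attack, to a tiny linear "cat vs. dog" scorer instead of a deep network, so the gradient is simply the weight vector. The weights, bias, and 8-"pixel" image are invented for illustration.

```python
# Toy adversarial example against a linear "cat vs. dog" scorer.
# Assumption: the weights, bias, and 8-"pixel" image are invented for illustration.

def score(weights, bias, x):
    """Weighted sum of pixels; a positive score means 'cat'."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def classify(weights, bias, x):
    return "cat" if score(weights, bias, x) > 0 else "dog"

def sign(v):
    """Return +1, -1, or 0 depending on the sign of v."""
    return (v > 0) - (v < 0)

def fgsm_attack(weights, x, epsilon):
    """Fast gradient sign method, specialised to a linear model.

    The gradient of the score with respect to each pixel is that pixel's
    weight, so moving each pixel by -epsilon * sign(weight) lowers the 'cat'
    score as much as possible while changing no pixel by more than epsilon.
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]

weights = [0.5, -0.3, 0.8, -0.6, 0.4, -0.2, 0.7, -0.5]
bias = -0.4
image = [0.6, 0.4, 0.5, 0.5, 0.6, 0.5, 0.5, 0.4]

adversarial = fgsm_attack(weights, image, epsilon=0.1)
largest_change = max(abs(a - b) for a, b in zip(adversarial, image))

print("original:", classify(weights, bias, image))
print("perturbed:", classify(weights, bias, adversarial))
print("largest pixel change:", round(largest_change, 3))
```

No pixel moves by more than 0.1, yet the label flips from "cat" to "dog". One common explanation for why deep networks are vulnerable is this same effect at scale: many tiny changes, each pushed in the direction the model is most sensitive to, add up across thousands of pixels.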

The Road Ahead: Beyond Pattern Recognition

Deep learning excels at recognizing patterns but struggles with common sense and reasoning. A child knows that ice cream will melt if placed in the oven, yet a deep learning model may not understand unless it has seen labeled examples of melting ice cream.

A self-driving car might handle millions of traffic scenarios but still struggle with unusual events, like a flooded road. A human, even without prior experience, reasons that driving through deep water is dangerous. Today's AI largely lacks this kind of flexible reasoning.

The future of AI lies in going beyond pattern recognition. Researchers are working on systems that combine deep learning with logic, reasoning, and explainability. The goal is not just to make bigger models with more data but to build AI that can understand, adapt, and explain itself in ways closer to human intelligence.

A Note on Deep Learning Algorithms

Different kinds of deep learning models are used depending on the problem. You don't need the math, just the big picture:

- Convolutional models: Excellent for working with images and video. They power facial recognition, medical scans, and self-driving car vision.

- Recurrent models: Designed for sequences such as text, speech, or stock market data. They read a sequence one step at a time and "remember" what came before by carrying information forward.

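- Transformers: The breakthrough architecture behind today's most powerful AI, including language models like GPT. Instead of reading a sequence one step at a time, they process all of it in parallel and use a mechanism called attention to weigh how each part relates to every other part. This makes them faster to train and better at handling context.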

- Multimodal models: The newest generation. These systems can work across different kinds of input, for example reading text while analyzing images or sound.
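The difference between the recurrent and Transformer approaches in the list above can be sketched in pure Python. Assumptions: the three-"word" sequence is made up; the recurrent update uses a single fixed decay factor instead of learned weight matrices; and the attention step uses the raw vectors as queries, keys, and values instead of learned projections. Real recurrent networks and Transformers learn all of these parameters.

```python
import math

# Hypothetical toy sequence: each "word" is a 2-number vector.
sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

def recurrent_pass(seq, decay=0.5):
    """Recurrent-style: read one step at a time, carrying a hidden state forward.

    Simplified update (assumption): hidden = tanh(decay * hidden + input).
    """
    hidden = [0.0] * len(seq[0])
    states = []
    for x in seq:  # strictly sequential: step t needs the result of step t-1
        hidden = [math.tanh(decay * h + xi) for h, xi in zip(hidden, x)]
        states.append(hidden)
    return states

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(seq):
    """Attention-style: every position compares itself with every position.

    Uses scaled dot-product scores. Each output row depends only on the
    input, not on other output rows, so all rows could be computed in parallel.
    """
    d = len(seq[0])
    outputs = []
    for query in seq:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in seq]
        weights = softmax(scores)
        # Each output is a weighted mix of all the input vectors.
        mixed = [sum(w * value[i] for w, value in zip(weights, seq)) for i in range(d)]
        outputs.append(mixed)
    return outputs

print("recurrent states:", recurrent_pass(sequence))
print("attention outputs:", self_attention(sequence))
```

The recurrent loop cannot start step three until step two is finished, while each attention output could be computed independently, which is the parallelism the Transformer entry refers to.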

These names matter less than the idea: deep learning adapts to many domains by using the right kind of architecture for the job.

Conclusion: A Powerful Tool, Not the Final Answer

Deep learning has changed the way machines see, hear, and interpret the world. It powers breakthroughs in safety, healthcare, communication, and entertainment. But it remains a tool, not an all-knowing brain. It cannot yet reason, explain itself fully, or apply common sense the way humans do.