For more than half a century, robotics has promised to bring intelligence into motion. From the first experimental machines at Stanford and MIT to the humanoid marvels of Boston Dynamics, engineers have imagined robots that would one day walk, work, and perhaps even think like us. Each decade brought a new demonstration: smoother motion, faster reflexes, finer precision. Robots now run, jump, flip, and dance in viral videos that captivate the public imagination.

And yet, behind the choreography lies a simple truth. Nothing inside these machines understands what it is doing. A robot can balance on one leg and perform acrobatics that would exhaust an athlete, but it does not know what balance means or why movement matters. It reacts to sensors and algorithms, not to experience or intention.

Fifty years of movement without mind.

From Shakey, built at the Stanford Research Institute in the late 1960s and often described as the first general-purpose mobile robot, to today’s quadrupeds and humanoids, robotics has advanced spectacularly in control but not in comprehension. Shakey could map a room and push a box. Boston Dynamics’ Atlas can sprint across rough terrain and leap onto platforms. The difference in performance is enormous, yet the underlying limitation remains: neither understands what a room or a box is. Progress in motion has not become progress in meaning.

From Shakey to Boston Dynamics

Shakey was the first robot able to perceive its surroundings through sensors and act on them according to a simple plan. It was revolutionary in its time because it combined computer vision, logical reasoning, and mechanical action. Researchers saw it as the beginning of artificial understanding. But what they built was not a thinker; it was an executor. Every action it performed was defined in advance by human designers, who translated perception into symbols the machine could manipulate.

Half a century later, Boston Dynamics’ robots embody the opposite philosophy. They do not reason symbolically; they rely on complex control systems, dynamics models, and learned reflexes. They perform stunning feats of coordination: backflips, obstacle runs, synchronized dances. Yet these performances are still products of control, not cognition. The robot’s grace is mathematical, not mental.

The irony is striking. The more agile machines become, the more we mistake agility for awareness. A robot’s ability to imitate the body deceives us into imagining it has also inherited the mind. But motion, no matter how fluid, is not thought.

They move like us, but they do not know us.

Why Robots Dance Beautifully but Still Cannot Think

When Boston Dynamics released a video of its robots dancing to music, millions of viewers felt awe and unease. The precision of the choreography seemed almost human. But behind the rhythm was a vast array of pre-programmed motions, feedback loops, and control parameters. The robots were not dancing because they enjoyed the song. They were executing carefully planned trajectories computed in advance.

The distinction is crucial. To dance is to interpret rhythm through feeling and intention. It is to inhabit time, to express awareness through motion. Robots do none of this. Their elegance is an illusion born of precision. We project meaning into their gestures because they resemble ours, just as early viewers of automata in the eighteenth century imagined hidden souls behind clockwork dolls.

Modern robotics has perfected imitation, not imagination. What we witness is not creativity but compliance. A robot does not decide what it wants to do; it performs what we design it to perform. It does not improvise; it executes.

Machines can execute, but only humans can improvise.

The Myth of Autonomy

The most seductive idea in robotics is autonomy. Engineers speak of self-driving cars, self-navigating drones, and self-learning machines. But autonomy in machines is a misnomer. What appears to be self-direction is in fact the extension of invisible human labor. Behind every “autonomous” system lie millions of lines of code, labeled data, and design choices that define how the machine perceives and reacts.

A car that “drives itself” is not making independent decisions; it is following statistical models trained on human driving patterns. When conditions deviate from the training data, as with fog, construction zones, or moral dilemmas, the illusion collapses. True autonomy requires not just reaction but understanding: the ability to interpret ambiguity, infer intention, and adapt to moral context. Robots have none of these capacities.

Autonomy without comprehension is not freedom. It is automation. We call it self-governing only because we no longer see the humans behind the code. The myth of autonomy disguises dependence.

In practice, so-called autonomous vehicles rely on a layered architecture that combines sensor fusion, mapping, and probabilistic planning. Cameras, radar, and LiDAR feed data into convolutional or transformer-based networks that classify objects and estimate motion. A separate decision layer translates those perceptions into steering and braking commands using algorithms such as Model Predictive Control or reinforcement-learning policies. None of these modules understands what a pedestrian is; they operate on probability distributions and feature vectors. When visibility drops or a situation falls outside the training data, the entire system reveals its dependence on human design rather than genuine self-direction.
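The claim that these modules "operate on probability distributions" can be made concrete with a minimal sketch. It assumes each sensor reports a Gaussian estimate of the distance to the nearest obstacle. The fusion step is inverse-variance weighting, which is the static form of a Kalman measurement update. The decision rule brakes when a pessimistic distance falls inside the stopping distance. The class and function names, the noise values, and the 6 m/s² braking limit are all hypothetical. Production stacks track many objects over time with far richer models. Nothing in the code refers to what the obstacle is.

```python
import math
from dataclasses import dataclass


@dataclass
class RangeEstimate:
    """Gaussian belief about the distance (m) to the nearest obstacle ahead."""
    mean: float
    var: float


def fuse(estimates):
    """Combine independent Gaussian estimates by inverse-variance weighting.

    This is the static form of a Kalman measurement update: more certain
    sensors (smaller variance) receive proportionally more weight.
    """
    weights = [1.0 / e.var for e in estimates]
    total = sum(weights)
    mean = sum(w * e.mean for w, e in zip(weights, estimates)) / total
    return RangeEstimate(mean, 1.0 / total)


def should_brake(belief, speed_mps, max_decel=6.0, k_sigma=2.0):
    """Brake if a pessimistic distance (mean - k*sigma) is within stopping distance.

    max_decel (m/s^2) and k_sigma are hypothetical tuning values.
    """
    stopping_distance = speed_mps ** 2 / (2.0 * max_decel)
    pessimistic = belief.mean - k_sigma * math.sqrt(belief.var)
    return pessimistic <= stopping_distance


# Hypothetical readings: camera, radar, LiDAR (mean in m, variance in m^2).
readings = [RangeEstimate(30.0, 4.0), RangeEstimate(28.0, 1.0), RangeEstimate(28.5, 0.25)]
belief = fuse(readings)
print(f"fused distance {belief.mean:.2f} m, variance {belief.var:.3f} m^2")
print("brake at 15 m/s:", should_brake(belief, 15.0))
print("brake at 20 m/s:", should_brake(belief, 20.0))
```

The fused variance is smaller than that of any single sensor, which is why fusion helps. Even so, the "decision" remains a comparison between two numbers.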

Automation pretends to be intelligence.

Control Versus Understanding

In robotics, success is measured by control: how precisely a machine can follow a path, balance on a leg, or grasp an object. Every improvement in sensors and actuators enhances that control. But control is not understanding. To control is to predict and regulate. To understand is to interpret and choose.

Most robotic motion today is governed by feedback control. Systems such as PID controllers, inverse kinematics solvers, or trajectory-optimization loops continuously minimize error between desired and measured positions. Deep reinforcement learning extends this by letting networks adjust control policies through trial and reward, as in robot arms trained in simulation to stack blocks or open doors. Yet even these learning-based systems remain reactive. They optimize functions, not intentions. The robot achieves stability and precision but does not interpret why an action matters. Control theory perfects movement; cognition gives it meaning.
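The following is a minimal sketch of the feedback loop just described. It assumes a single joint modelled as a unit mass that is pushed by a control force and pulled back by a constant load, such as gravity. The gains are hypothetical, chosen so that the continuous-time closed loop has poles at -2, -3 and -4. The integral term is what cancels the steady load. The loop only ever shrinks an error signal; nothing in it represents why the joint should reach the setpoint.

```python
def simulate_pid(setpoint=1.0, kp=26.0, ki=24.0, kd=9.0, load=-2.0,
                 dt=0.001, duration=10.0):
    """Drive a unit-mass joint to `setpoint` with a PID controller.

    Hypothetical plant: x'' = u + load. Returns the final position and
    the final control force.
    """
    x, v = 0.0, 0.0      # position and velocity of the joint
    integral = 0.0       # accumulated error for the I term
    u = 0.0
    for _ in range(int(round(duration / dt))):
        error = setpoint - x
        integral += error * dt
        # The D term uses -v: for a constant setpoint, d(error)/dt = -v.
        # Differentiating the measurement avoids a spike when the setpoint jumps.
        u = kp * error + ki * integral - kd * v
        accel = u + load     # unit mass, so acceleration equals net force
        v += accel * dt      # semi-implicit Euler integration
        x += v * dt
    return x, u


final_x, final_u = simulate_pid()
print(f"position {final_x:.4f}, control force {final_u:.4f}")
```

At steady state, the controller outputs a force equal and opposite to the load, and the integral term holds it there. Setting `ki=0.0` leaves the joint short of the setpoint by `load/kp`, about 0.077 with these values.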

When a robotic arm picks up a cup, it does so by calculating geometry and force. When a human does the same, they anticipate weight, texture, fragility, and purpose. The difference is not in accuracy but in awareness. The robot manipulates; the human perceives.
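"Calculating geometry" can be made concrete with the textbook inverse kinematics of a two-link planar arm. This is a minimal sketch that assumes hypothetical link lengths of 0.30 m and 0.25 m and picks the solution with a positive elbow angle. Real manipulators have more joints and usually rely on numerical solvers. The solver returns joint angles for a point in space; it has no notion of whether that point holds a cup.

```python
import math


def inverse_kinematics(x, y, l1=0.30, l2=0.25):
    """Joint angles (rad) that place a two-link planar arm's tip at (x, y).

    Uses the law of cosines for the elbow angle; raises ValueError if the
    target lies outside the reachable annulus.
    """
    cos_elbow = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if not -1.0 <= cos_elbow <= 1.0:
        raise ValueError(f"target ({x}, {y}) is out of reach")
    elbow = math.acos(cos_elbow)  # one of the two mirror-image solutions
    shoulder = math.atan2(y, x) - math.atan2(l2 * math.sin(elbow),
                                             l1 + l2 * math.cos(elbow))
    return shoulder, elbow


def forward_kinematics(shoulder, elbow, l1=0.30, l2=0.25):
    """Tip position for given joint angles, used here to check the IK result."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y


angles = inverse_kinematics(0.30, 0.20)
print("joint angles (rad):", angles)
print("tip position:", forward_kinematics(*angles))
```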

This distinction defines the boundary between artificial movement and living intelligence. The former operates on feedback; the latter operates on meaning. Robotics, for all its progress, remains on the mechanical side of that divide.

The Illusion of Simple Tasks

We often assume that the simplest human tasks should be the easiest to automate. If a robot can leap across platforms, surely it can make a pizza, pour a cup of coffee, or sew a shirt. Yet each attempt to mechanize such everyday gestures exposes how much hidden intelligence ordinary actions contain.

Consider Zume Pizza, a Silicon Valley startup that once promised to revolutionize food preparation. Its founders imagined robotic arms spreading tomato sauce, sliding pizzas into ovens, and slicing them with machine precision. Backed by hundreds of millions in venture funding, Zume built automated kitchens that could assemble more than a hundred pizzas per hour and delivery trucks fitted with ovens that baked them en route. In theory, it was a marvel of coordination: sensors, conveyors, and algorithms all synchronized in pursuit of efficiency. In practice, it failed. The system could not adapt to the countless small variations that human cooks handle effortlessly: uneven dough, missing toppings, fluctuating oven temperatures. Zume’s robots could follow instructions, but they could not improvise. By 2023, the company that once symbolized the future of food automation had quietly shut down.

The same story repeats across domains. Decades after vending machines appeared, we still do not have a truly robotic café. A human barista does more than toggle switches and pour liquids. They adjust to cup size, temperature, spillage, and flow rate with intuitive ease. Even a child instinctively knows when to stop filling a glass before it overflows. A robot does not. It must be told exactly how long to open a valve and how far to move its arm. Change the cup or the tap, and it fails. Machines can reproduce motion, but they cannot understand context. They lack the silent awareness of why an action is being performed.
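The valve-timing problem can be sketched in a few lines. Assume a hypothetical tap with a constant flow rate. An open-loop routine opens the valve for a time calibrated to one particular cup, and that same timing overflows when the tap runs faster or the cup is smaller. A closed-loop version that polls a hypothetical fill-level sensor copes with both changes, but only because an engineer decided in advance what to measure and where to stop.

```python
def timed_pour(cup_ml, flow_ml_per_s, valve_open_s):
    """Open-loop pour: the valve stays open for a fixed, precomputed time.

    Returns (volume left in the cup, volume spilled), both in millilitres.
    """
    poured = flow_ml_per_s * valve_open_s
    in_cup = min(poured, cup_ml)
    return in_cup, poured - in_cup


def sensed_pour(cup_ml, flow_ml_per_s, stop_fraction=0.9, dt=0.01):
    """Closed-loop pour: close the valve once a level sensor reads stop_fraction full.

    The sensor is simulated by tracking the poured volume at each time step.
    """
    if flow_ml_per_s <= 0:
        raise ValueError("flow must be positive")
    level = 0.0
    while level < stop_fraction * cup_ml:
        level += flow_ml_per_s * dt
    return level


# Timing calibrated for a 250 ml cup under a 50 ml/s tap: 4.5 s fills it to 90%.
print(timed_pour(250, 50, 4.5))   # the calibrated case
print(timed_pour(250, 80, 4.5))   # faster tap: overflows
print(timed_pour(150, 50, 4.5))   # smaller cup: overflows
print(sensed_pour(250, 80), sensed_pour(150, 50))
```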

Walmart discovered a similar truth when it deployed autonomous robots to scan shelves for missing inventory. After three years of trials, the project was abandoned. Human workers, equipped with handheld scanners and practical judgment, proved faster and more reliable. What seems simple, recognizing an empty shelf, turns out to depend on perception, context, and improvisation.

Even textile manufacturing, long assumed ripe for full automation, resists the dream. Sewing fabric requires constant tactile feedback. A garment worker senses tension, stretch, and softness through touch, adjusting every fraction of a second. Machines cannot yet replicate that sensory intelligence. The cloth slips, folds, or tears, confusing the algorithm. The result is that one of humanity’s oldest crafts remains among the least automated.

These failures reveal something profound. The difficulty of automating “simple” human tasks is not mechanical but cognitive. Each action carries embedded understanding, a feel for texture, timing, resistance, and meaning that no algorithm captures. What looks trivial from the outside hides immense perceptual and experiential depth.

The problem is not the sophistication of our machines but the poverty of their perception. What we call simplicity in human behavior is built on layers of sensory intelligence. Before a machine can think, it would first have to touch.

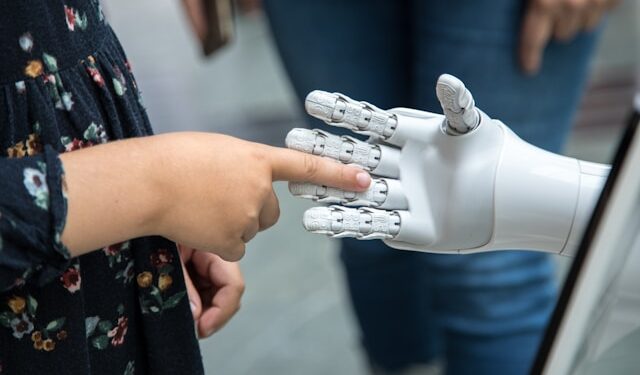

The Hand and the Mind

Every child knows what a robot cannot: that the world is not a collection of coordinates but a field of sensations. The human hand is the most extraordinary bridge between matter and meaning ever evolved. It can hold a feather without crushing it, a hammer without hesitation, a loved one’s hand without calculation. It measures the world not only by pressure and temperature but by intention. Through touch we learn weight, resistance, fragility, and form. Through gesture we translate thought into action.

For half a century, roboticists have tried to reproduce that miracle. The results remain crude approximations. Even the most advanced robotic hands, armed with tactile sensors and adaptive grips, cannot match the silent intelligence of flesh. They can pick up an apple under laboratory lighting but fail when the fruit is slightly bruised, wet, or irregular. They can tighten a screw but cannot sense when force turns to fracture. They can repeat what they are told but cannot improvise what they do not expect.

The Shadow Hand and the DLR Hand II, for instance, combine many actuated joints with position, force, and tactile sensors to approximate human dexterity. OpenAI’s Dactyl system used reinforcement learning to teach a robotic hand the finger movements needed to solve a Rubik’s cube, running millions of simulated grasps before performing the task in the real world. These achievements showcase mechanical precision but also expose its fragility: a shift in lighting or friction can derail performance. Engineers replicate structure, not sensation. The data streams flowing through these hands are mathematical surrogates for feeling, never its equivalent.
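The friction point can be illustrated with a deliberately simple model; this is not OpenAI's method. Assume a two-finger pinch grasp that holds an object whenever the total friction force, 2·μ·F, can support its weight. A grip force tuned for one friction coefficient fails on a slipperier surface. Tuning against friction values sampled across a range, a crude form of the domain randomization used to train systems like Dactyl, widens the envelope. The grip still fails outside the sampled range, as with a wet apple. The mass, friction range, and safety margin are hypothetical.

```python
import random

G = 9.81  # gravitational acceleration, m/s^2


def holds(grip_force_n, mass_kg, mu):
    """Two-finger pinch: friction at both contacts must carry the weight."""
    return 2.0 * mu * grip_force_n >= mass_kg * G


def tune_grip(mass_kg, mus, safety=1.1):
    """Smallest grip force (with margin) that holds for every friction value in `mus`."""
    return safety * mass_kg * G / (2.0 * min(mus))


mass = 0.2  # kg, roughly an apple (illustrative)

# Tuned for a single "laboratory" friction coefficient.
narrow_force = tune_grip(mass, [0.8])

# Tuned against 1,000 friction coefficients sampled from [0.3, 1.0].
rng = random.Random(0)
randomized_force = tune_grip(mass, [rng.uniform(0.3, 1.0) for _ in range(1000)])

for mu in (0.8, 0.5, 0.1):  # dry, slightly slippery, wet
    print(f"mu={mu}: narrow holds={holds(narrow_force, mass, mu)}, "
          f"randomized holds={holds(randomized_force, mass, mu)}")
```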

A hand is not a mechanism; it is a mind extended into matter. It does not compute how to grasp; it feels. Each movement carries memory of pain, habit, experience, and empathy. When a human surgeon guides a scalpel or an artist shapes clay, the hand is thinking as much as the brain. It adjusts not by formula but by awareness. The robot, in contrast, follows thresholds and control loops. It has coordination without comprehension.

The miracle of motion is not speed but sense.

The Danger of Confusing Precision with Perception

Modern robots impress us because they achieve extreme precision. They can weld with sub-millimeter accuracy, assemble microchips, or suture tissue. The spectacle of precision creates an aura of intelligence. But precision and perception are not the same. A system may act flawlessly within narrow parameters yet remain blind to what lies beyond them.

Perception involves ambiguity, context, and value, the ability to see not just what is but what it means. A robot that paints a wall does not see color; it follows coordinates. A robot that diagnoses medical images does not perceive illness; it recognizes patterns.

This distinction is clear in machine-vision systems. Convolutional neural networks trained on labeled X-rays or MRI scans learn statistical correlations between pixel patterns and diagnostic categories. They excel at pattern recognition within familiar data but can fail when image contrast, patient positioning, or scanner hardware differ from the training set. Perception, in a biological sense, integrates vision with memory, context, and expectation. For AI, vision is a chain of matrix multiplications and simple nonlinear functions. The network maps inputs to outputs; it does not experience what a lung or a bone is.
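Here is a deliberately tiny sketch of "vision as matrix multiplication". A single linear layer followed by a softmax stands in for the stacked convolutions and nonlinearities of a real CNN; convolutions can themselves be written as matrix products. The weights, biases, and 2×2 "scan" are hypothetical and were not trained on any data. Halving the image's overall brightness, a crude stand-in for a different exposure setting, flips the label even though the pattern in the pixels is unchanged.

```python
import math

LABELS = ["normal", "abnormal"]

# Hypothetical weights for a 2x2 image flattened to 4 pixel intensities.
WEIGHTS = [
    [1.0, -1.0, -1.0, 1.0],   # row producing the "normal" score
    [-1.0, 1.0, 1.0, -1.0],   # row producing the "abnormal" score
]
BIASES = [0.8, -0.8]


def matvec(matrix, vector):
    """Plain matrix-vector product: each output is a weighted sum of pixels."""
    return [sum(w * p for w, p in zip(row, vector)) for row in matrix]


def softmax(scores):
    """Turn raw scores into probabilities (shifted by the max for numerical stability)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict(pixels):
    """Return the most probable label and the full probability vector."""
    scores = [s + b for s, b in zip(matvec(WEIGHTS, pixels), BIASES)]
    probs = softmax(scores)
    return LABELS[probs.index(max(probs))], probs


scan = [0.2, 0.8, 0.8, 0.2]        # brighter anti-diagonal
dimmer = [0.5 * p for p in scan]   # same pattern, half the exposure
print(predict(scan)[0], predict(dimmer)[0])
```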

When precision replaces perception, efficiency replaces empathy. We begin to mistake technical success for intellectual progress. The danger is philosophical as much as practical. The more perfect our tools become, the less we question what perfection means.

Robotics reveals the poverty of AI imagination.

When Automation Pretends to Be Intelligence

The field of robotics was once about creating machines that could perceive, reason, and act. Today, it has largely merged with machine learning, where success depends on data rather than design. Instead of building understanding, we build correlation. Robots learn by statistical exposure, not by insight. They become experts at repetition, not reasoning.

This shift has produced extraordinary functionality but little conceptual growth. The robot that delivers a package, flips a burger, or folds laundry is a triumph of engineering but not of thought. It knows nothing of purpose, value, or meaning. Automation masquerades as intelligence because we measure progress by performance rather than comprehension.

The imagination of AI has become mechanical. We dream not of understanding but of replacement, not of wisdom but of efficiency. Robotics shows us this clearly: the dream of thinking machines has narrowed into the pursuit of obedient ones.

Robots still live in the shadow of science fiction.

The Shadow of Fiction

Our expectations of robotics are not scientific but cinematic. From Metropolis to Blade Runner to Ex Machina, culture has taught us to associate human-shaped machines with consciousness. The fantasy of the robot is the fantasy of ourselves reborn in metal: tireless, emotionless, immortal.

Real robotics, however, exists far from that mythology. Robots do not crave freedom, love, or power. They are not trapped souls waiting to awaken. They are mechanical systems obeying physical laws. Yet the cultural shadow of science fiction continues to shape how we interpret their progress. Each new advancement in locomotion or gesture is hailed as a step toward sentience.

This narrative obscures reality. The humanoid robot does not prove intelligence; it flatters it. It reflects our desire to see ourselves in our machines. The illusion of progress in robotics is sustained by the confusion between simulation and substance.

Smarter tools, shallower minds.

The Paradox of Progress

The more sophisticated our robots become, the less we seem to understand ourselves. We measure success by technical performance while neglecting philosophical purpose. The robot’s perfection becomes a mirror for our own diminishing curiosity.

In factories, warehouses, and hospitals, automation increases productivity but narrows human involvement. Tasks once requiring judgment are reduced to supervision. The machine learns to execute, and the human learns to watch. The paradox is that progress in robotics often produces regression in reflection.

The promise of robotics was to liberate humanity from repetitive labor so we could devote ourselves to creativity and meaning. Instead, it risks making us spectators of our own displacement. The danger is not mechanical rebellion but moral resignation, a quiet erosion of agency under the comfort of efficiency.

We build machines that move flawlessly but think not at all, while we ourselves move less and think less deeply. The illusion of progress hides a deeper stagnation, the loss of purpose in the pursuit of perfection.

Beyond the Illusion

True progress in robotics will not come from faster processors or stronger servos but from reimagining what intelligence means. Machines will continue to excel at control, precision, and endurance. They will surpass humans in accuracy and consistency. But they will not create meaning or feel wonder. Those capacities belong to consciousness, not computation.

The challenge ahead is not to make robots more human, but to make humans more aware of what humanity entails. The difference between us and our machines is not diminishing; it is deepening. Every improvement in robotics reminds us that intelligence without understanding is emptiness in motion.

The future of robotics should be measured not by autonomy but by alignment: not by how far machines can act alone, but by how well they serve human intention. The goal is not imitation but collaboration, building tools that extend our imagination rather than replace it.

Current research in embodied AI and soft robotics points toward that collaboration. Instead of rigid machines programmed for narrow tasks, scientists design adaptive materials and bio-inspired control loops that let robots learn through interaction. Soft robotic grippers change shape in response to pressure, while neuromorphic chips attempt to emulate the brain’s event-driven computation for energy efficiency. These directions suggest that progress will come not from scaling computation but from integrating perception, memory, and action in a single embodied loop, the very pattern that evolution discovered in the hand and mind.
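The event-driven idea behind neuromorphic hardware can be sketched with a leaky integrate-and-fire neuron, a standard simplified model in spiking-network research. The membrane value leaks toward rest and integrates its input. It emits a discrete spike only when it crosses a threshold, and between spikes nothing is sent downstream. The time constant, threshold, and input levels below are hypothetical and do not describe any particular chip.

```python
def lif_spikes(drive, steps=200, dt=1.0, tau=20.0, threshold=1.0, v_rest=0.0):
    """Simulate a leaky integrate-and-fire neuron under constant input.

    `drive` is the level (relative to rest) the membrane would settle at if
    the neuron never fired. Returns the time steps at which spikes occur.
    """
    v = v_rest
    spikes = []
    for step in range(steps):
        # Euler step of tau * dv/dt = -(v - v_rest) + drive
        v += (dt / tau) * (-(v - v_rest) + drive)
        if v >= threshold:
            spikes.append(step)  # emit an event...
            v = v_rest           # ...and reset the membrane
    return spikes


weak = lif_spikes(drive=0.8)    # settles below threshold: no events at all
strong = lif_spikes(drive=1.5)  # crosses threshold periodically
print(len(weak), len(strong), strong[:3])
```

A weak input produces no events at all, so downstream circuitry has nothing to process. That sparsity is the source of the energy savings neuromorphic designs aim for.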

The illusion of progress fades when we remember that understanding cannot be automated. Movement without mind is spectacle, not thought. The next frontier is not to make robots think like us, but to think more clearly about why we build them at all.

After all the demonstrations and headlines, the essence of robotics remains unchanged: machines execute, humans interpret. Robots act, humans understand. The miracle of motion that once inspired science fiction has become ordinary, but the miracle of consciousness remains unexplained.

Progress in robotics is real and remarkable, but it is not the progress of understanding. The dance of machines delights the eye, yet it does not move the mind. The illusion of progress ends where meaning begins.

The question is no longer whether robots will think, but whether humans will continue to.