Anxious over false flags from AI-detection software, U.S. college students are adopting countermeasures, many of them, ironically, powered by AI. A cottage industry of “humanizer” tools promises to rewrite prose to evade detectors, while platforms such as Grammarly’s Authorship log keystrokes and revision histories so students can prove they wrote their work. Educators report rising labor costs for investigating suspected cases and blurred norms about acceptable AI use, even as companies like Turnitin and GPTZero roll out upgraded detection and caution schools not to treat detection scores as proof.

The standoff underscores a broader shift: AI is now embedded across classroom tools, enforcement remains imperfect, and non-native English speakers face a higher risk of having their writing misidentified as AI-generated. With lawsuits mounting and pressure growing for clearer rules, universities are weighing surveillance-heavy verification against policy reforms and assessment redesigns, while some experts call for government action to regulate the academic cheating ecosystem.

Related article:

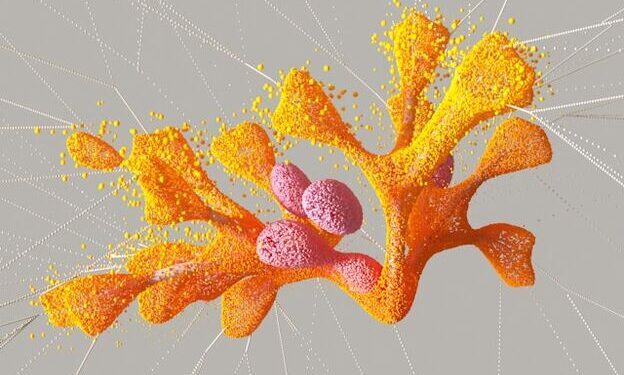

DetectGPT: Zero‑Shot Machine‑Generated Text Detection using Probability Curvature