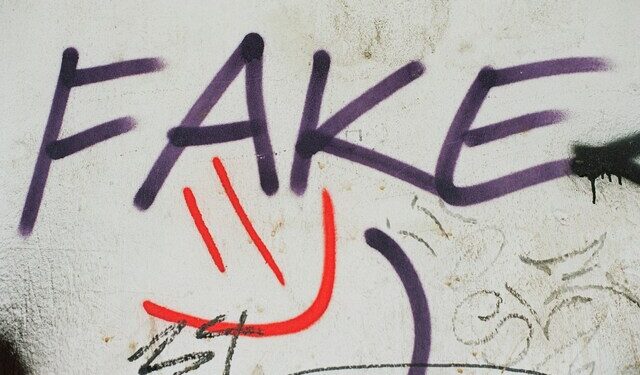

The article explores how, during the recent conflict between Iran and Israel, millions of people turned to AI chatbots such as Grok, ChatGPT, and Gemini for real-time verification of images and videos circulating online. However, these chatbots provided inconsistent, often unreliable answers, further obscuring the truth. The surge of increasingly sophisticated AI-generated videos and images is making it easier for misinformation to spread and harder for the public to discern facts. Experts discuss the “liar’s dividend,” in which doubts over authenticity now pervade even legitimate content, and warn that generative AI has turbocharged influence campaigns and state-sponsored propaganda. Researchers urge caution and emphasize the limitations of current AI systems for fact-checking, noting that these technologies often reinforce users’ existing beliefs and contribute to an unstable information environment during critical events.

As Iran and Israel Clashed, People Sought Truth from AI—And Came Up Short

Copyright © 2025 AI Business Journal